Hi, we're new here.

We want to make a newsfeed with just news. No bots, no baby pictures, and no weird uncles sharing questionable articles. Just what's happening in the world.

Be sure to sign up or sign in so you can choose which sources and topics you see in your newsfeed.

Please take a look around and let us know what you think.

A DAY AGO

What Is Artemis III? What to Know About NASA’s Latest Space Mission.

Artemis III is the third in a series of missions that gets humans closer to returning to the surface of the moon.

A DAY AGO

A Surprising Find in Ancient Squirrel Poop: Woolly Mammoth Meat

In a new study, fossilized droppings suggested that ancient ground squirrels ate the meat of much larger animals, including mammoths, bison and saber-toothed cats.

A DAY AGO

Can NASA Really Land Astronauts on the Moon by 2028?

Experts have been hopeful, but say the agency’s lunar aspirations are largely at the whims of two billionaires, Elon Musk and Jeff Bezos.

A DAY AGO

How Will the Artemis III Astronauts Train?

The Artemis III astronauts who were announced today will have had less mission training time than their Artemis II counterparts.

- SCIENTIFIC AMERICAN 21 HOURS AGO

How the new FDA-approved ingredient bemotrizinol enhances sunscreen protection

Dermatologists and skincare aficionados are excited for the U.S. to finally get a new, more protective sunscreen filter after more than 20 years of regulatory roadblocks. Here's how bemotrizinol works.

- SCIENTIFIC AMERICAN 8 HOURS AGO

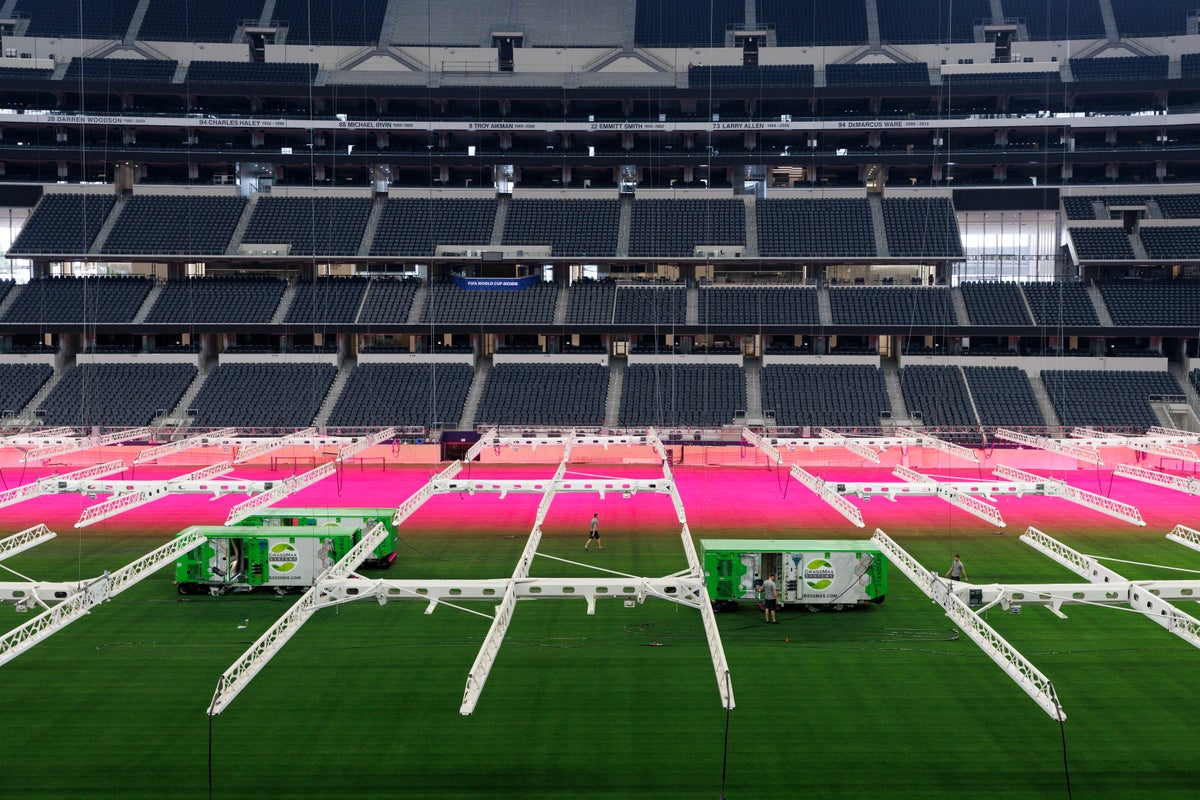

The surprising science behind the 2026 World Cup grass

How scientists are engineering the perfect World Cup pitch—one so flawless that players never notice it

- SCIENTIFIC AMERICAN 8 HOURS AGO

The World Cup could be a petri dish for disease. Wastewater could sound the alarm

As millions of soccer fans pack FIFA World Cup venues, public health scientists created a wastewater monitoring network to forecast potential disease threats—from measles to Ebola

- SCIENTIFIC AMERICAN 7 HOURS AGO

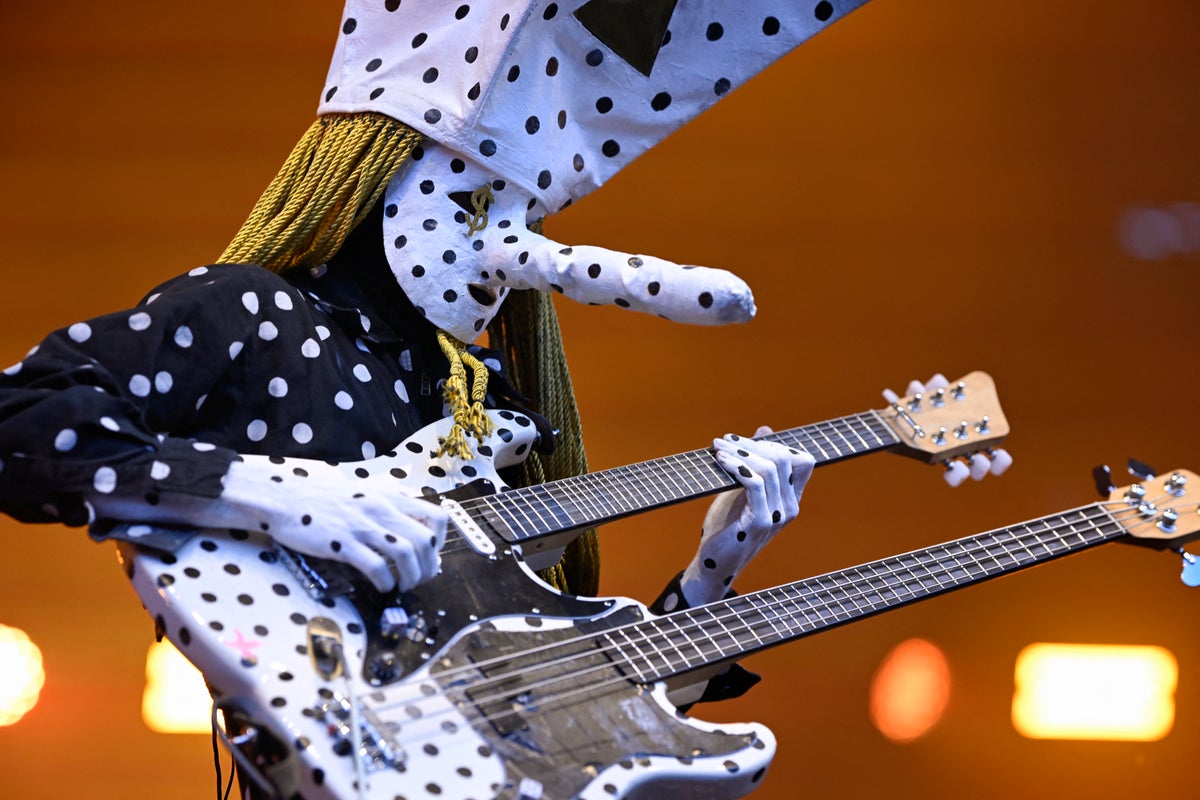

How Canadian rock duo Angine de Poitrine play with neurobiology and physics to make viral music

Angine de Poitrine don't abide by the usual rules of Western music, using their own custom-built guitar to strike notes that shouldn't exist

- SCIENTIFIC AMERICAN 4 HOURS AGO

How FIFA is engineering natural grass for the 2026 World Cup

FIFA is building temporary natural-grass fields meant to play consistently across 16 stadiums in three countries

- SCIENTIFIC AMERICAN 5 HOURS AGO

Cats, unlike dogs and toddlers, help you only when it helps them

Dogs spontaneously aid struggling humans the way young children do—whereas cats wait until they stand to benefit

- SCIENTIFIC AMERICAN 3 HOURS AGO

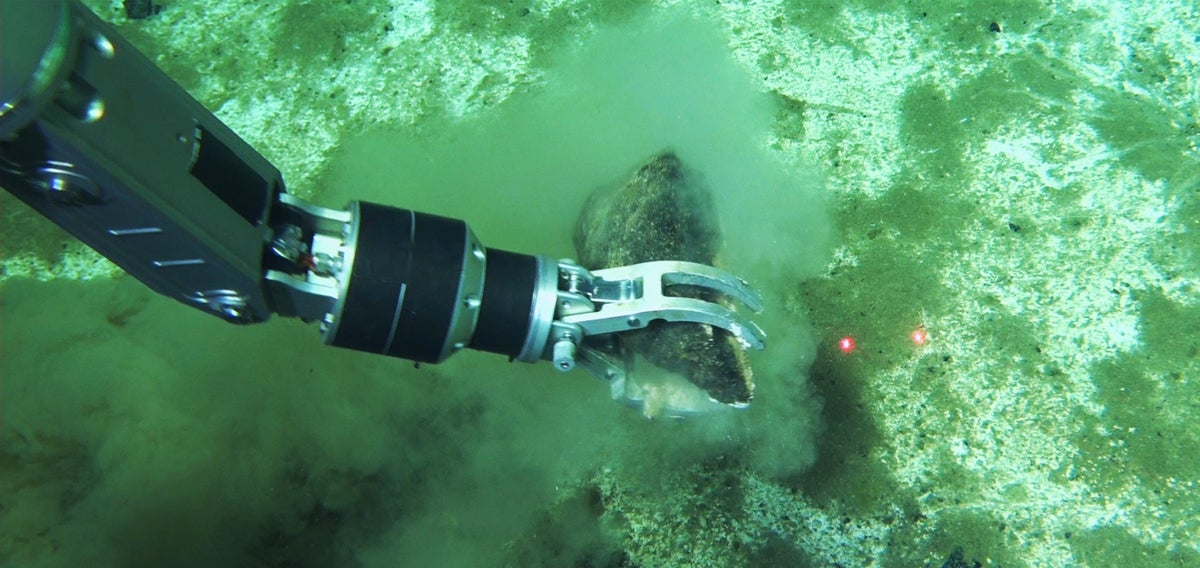

Largest whale ‘graveyard’ discovered, with skeletons spanning 5 million years

The fossilized remains of more than 450 whales have amassed along a 750-mile-long stretch of the Indian Ocean floor

3 HOURS AGO

Indonesia Landslides Devastated Endangered Orangutans, Study Finds

More than 5 percent of the species is estimated to have been lost when a climate-fueled storm unleashed torrents of water, mud and debris.

- SCIENTIFIC AMERICAN 2 HOURS AGO

How to build kids’ ‘cognitive endurance’ in an age of distraction

The ability to run "mental marathons" is a skill children can learn through simple, but dedicated, practice

- SCIENTIFIC AMERICAN 2 HOURS AGO

How to tell if your dog is left-pawed or right-pawed, according to science

A step-by-step guide to the "Doginburgh Inventory," a new pawedness test developed by dog behavior researchers

AN HOUR AGO

NASA Crew-12 Commander Captures Snaky Southern Lights From Space Station

The footage of the aurora over Earth’s Southern Hemisphere was shared on Sunday by Jessica Meir, commander of NASA’s Crew-12 mission.
